Federated Learning System

We use vertical federated learning as an example to introduce the architecture of a federated learning system and to explain in detail how it works.

Architecture for a Federated Learning System

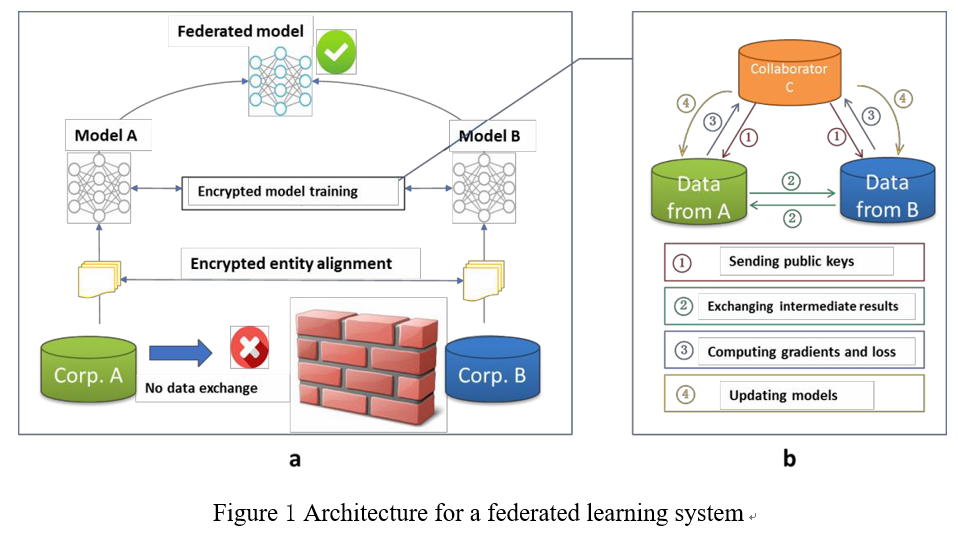

First, consider a scenario with two data owners (i.e., companies A and B); the same architecture extends to scenarios involving more data owners. Suppose that companies A and B want to jointly train a machine learning model, and that each company’s business systems hold its own data. Company B also holds the label data that the model needs to predict. For data privacy and security reasons, A and B cannot exchange data directly. Instead, the model can be built with a federated learning system, which consists of two parts, as shown in Figure 1a, and is complemented by the incentive mechanism described in Part 3.

Part 1: Encrypted entity alignment. Because the user groups of the two companies do not fully overlap, the system uses an encryption-based user ID alignment technique to identify the users common to both parties without A or B exposing its data. Users that the two parties do not share are not revealed to either side.
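The text does not name a specific alignment technique. As a minimal sketch, assuming a Diffie-Hellman-style private set intersection, each party blinds hashed user IDs with its own secret exponent; because exponentiation commutes, IDs held by both parties end up with identical double-blinded values, while the remaining IDs stay hidden. The names `hash_to_group`, `new_secret`, `blind`, and `align`, as well as the toy modulus, are illustrative assumptions and not part of the system described above.

```python
import hashlib
import math
import secrets

P = 2**127 - 1  # a Mersenne prime; toy-sized modulus, not a production parameter

def hash_to_group(user_id: str) -> int:
    """Map a user ID to an integer in [1, P - 1]."""
    digest = hashlib.sha256(user_id.encode("utf-8")).digest()
    return int.from_bytes(digest, "big") % (P - 1) + 1

def new_secret() -> int:
    """Pick a secret exponent coprime to P - 1 so that blinding is one-to-one."""
    while True:
        k = secrets.randbelow(P - 3) + 2
        if math.gcd(k, P - 1) == 1:
            return k

def blind(values, secret):
    """Raise every value to the secret exponent modulo P."""
    return [pow(v, secret, P) for v in values]

def align(ids_a, ids_b):
    """Return the IDs of A that B also holds, using only blinded values."""
    a, b = new_secret(), new_secret()  # a stays with A, b stays with B
    # A -> B: H(id)^a for each of A's IDs.
    a_once = blind([hash_to_group(i) for i in ids_a], a)
    # B -> A: H(id)^b for B's IDs, plus A's values raised to b (same order).
    b_once = blind([hash_to_group(i) for i in ids_b], b)
    a_twice = blind(a_once, b)
    # A raises B's values to a; matching double-blinded values are common IDs.
    b_twice = set(blind(b_once, a))
    return [uid for uid, v in zip(ids_a, a_twice) if v in b_twice]

common = align(["u1", "u2", "u3", "u4"], ["u3", "u5", "u1"])
print(common)
```

In this sketch only A learns the intersection and would then share it with B; a real deployment would also need vetted group parameters and protection against malformed inputs.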

Part 2: Encrypted model training. Once the common entities are determined, their data can be used to train the machine learning model. To keep the data confidential during training, the system relies on a third-party collaborator C to handle encryption. Taking a linear regression model as an example, the training process can be divided into the following four steps (as shown in Figure 1b):

- Step ①: Collaborator C creates an encryption key pair and sends the public key to A and B.

- Step ②: A and B encrypt and exchange the intermediate results needed for the gradient and loss calculations.

- Step ③: A and B each compute their encrypted gradients, and B also computes the encrypted loss; A and B then send the encrypted values to C.

- Step ④: C decrypts the gradients and loss and sends them back to A and B; A and B update their model parameters accordingly.
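The four steps can be sketched end to end in a single process. This is an illustration under stated assumptions, not the system's actual implementation: it assumes an additively homomorphic scheme (a toy Paillier with primes far too small for real use), fixed-point encoding of real numbers, an unregularized loss of half the mean squared residual, and no random masking of the values sent to C. All function names and the sample data are hypothetical.

```python
import math
import secrets

# Toy Paillier cryptosystem (additively homomorphic). Assumption: the text
# does not name a scheme. These primes are far too small for real use.
P, Q = 2**31 - 1, 2**61 - 1            # two Mersenne primes
N, N2 = P * Q, (P * Q) ** 2            # public key is N (generator g = N + 1)
PHI = (P - 1) * (Q - 1)                # private key, known only to C
MU = pow(PHI, -1, N)                   # modular inverse; requires Python 3.8+
SCALE = 10**6                          # fixed-point scale for real numbers

def fx(x, power=1):
    """Encode a float as a fixed-point integer at SCALE**power."""
    return round(x * SCALE**power)

def encrypt(m):
    r = secrets.randbelow(N - 1) + 1
    while math.gcd(r, N) != 1:         # r must be invertible modulo N
        r = secrets.randbelow(N - 1) + 1
    return (1 + (m % N) * N) * pow(r, N, N2) % N2

def decrypt(c):
    m = (pow(c, PHI, N2) - 1) // N * MU % N
    return m - N if m > N // 2 else m  # map back to a signed integer

def add(c1, c2):
    """Ciphertext of the sum of two plaintexts."""
    return c1 * c2 % N2

def enc_dot(ciphers, xs):
    """Ciphertext of sum_i m_i * x_i for encrypted m_i and plaintext x_i."""
    acc = encrypt(0)
    for c, x in zip(ciphers, xs):
        acc = add(acc, pow(c, fx(x) % N, N2))
    return acc

def dot(row, theta):
    return sum(a * b for a, b in zip(row, theta))

def column(X, j):
    return [row[j] for row in X]

def train(XA, XB, y, steps=150, lr=0.5):
    # Step 1 is implicit: C generated (N, PHI) above and published N.
    n = len(y)
    theta_a, theta_b = [0.0] * len(XA[0]), [0.0] * len(XB[0])
    losses = []
    for _ in range(steps):
        # Step 2: A sends [[u_A]] and [[sum u_A^2]] to B ...
        u_a = [dot(x, theta_a) for x in XA]
        enc_u_a = [encrypt(fx(u)) for u in u_a]
        enc_sq_a = encrypt(fx(sum(u * u for u in u_a), 2))
        # ... and B returns the encrypted residuals [[d]] = [[u_A + u_B]].
        u_b = [dot(x, theta_b) - yi for x, yi in zip(XB, y)]
        enc_d = [add(c, encrypt(fx(u))) for c, u in zip(enc_u_a, u_b)]
        # Step 3: each party builds its encrypted gradient; B builds the loss
        # from sum d^2 = sum u_A^2 + sum u_B^2 + 2 * sum u_A * u_B.
        enc_g_a = [enc_dot(enc_d, column(XA, j)) for j in range(len(theta_a))]
        enc_g_b = [enc_dot(enc_d, column(XB, j)) for j in range(len(theta_b))]
        enc_loss = add(enc_sq_a, encrypt(fx(sum(u * u for u in u_b), 2)))
        enc_loss = add(enc_loss, enc_dot(enc_u_a, [2 * u for u in u_b]))
        # Step 4: C decrypts and returns plaintext gradients and loss.
        g_a = [decrypt(c) / SCALE**2 / n for c in enc_g_a]
        g_b = [decrypt(c) / SCALE**2 / n for c in enc_g_b]
        losses.append(decrypt(enc_loss) / SCALE**2 / (2 * n))
        theta_a = [t - lr * g for t, g in zip(theta_a, g_a)]
        theta_b = [t - lr * g for t, g in zip(theta_b, g_b)]
    return theta_a, theta_b, losses

# A holds one feature; B holds another feature plus labels y = 2*x_A + 3*x_B.
XA = [[0.1], [0.4], [0.7], [1.0]]
XB = [[1.0], [0.2], [0.5], [0.9]]
y = [2 * a[0] + 3 * b[0] for a, b in zip(XA, XB)]
theta_a, theta_b, losses = train(XA, XB, y)
print(theta_a, theta_b, losses[0], losses[-1])
```

Because C decrypts the raw gradients in this sketch, C learns them; protocols of this kind commonly have A and B add random masks before sending values to C, which is omitted here for brevity.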

These steps are iterated until the loss function converges, which completes the training process. During entity alignment and model training, the data of A and B stay local, and the data exchanged during training does not leak private data. The two parties thus cooperatively train a common model with the help of federated learning.
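To make the quantities in this loop concrete, the loss and gradients for the linear regression example can be written out. The notation is an assumption introduced here for illustration: $x_i^A$ and $x_i^B$ are the features of common user $i$ held by A and B, $y_i$ is the label held by B, $\Theta_A$ and $\Theta_B$ are the two parties' parameters, $n$ is the number of common users, and the loss is taken to be an unregularized squared error.

$$
\begin{aligned}
u_i^A &= \Theta_A x_i^A, \qquad u_i^B = \Theta_B x_i^B - y_i, \qquad d_i = u_i^A + u_i^B, \\
\mathcal{L} &= \frac{1}{2n} \sum_i d_i^2
  = \frac{1}{2n} \Big( \sum_i (u_i^A)^2 + \sum_i (u_i^B)^2 + 2 \sum_i u_i^A u_i^B \Big), \\
\frac{\partial \mathcal{L}}{\partial \Theta_A} &= \frac{1}{n} \sum_i d_i \, x_i^A, \qquad
\frac{\partial \mathcal{L}}{\partial \Theta_B} = \frac{1}{n} \sum_i d_i \, x_i^B .
\end{aligned}
$$

Under these assumptions, each gradient is a sum of residuals $d_i$ weighted by features that one party holds in plaintext, and the loss splits into a term computable by A, a term computable by B, and a cross term. This is why an encryption scheme that supports addition of ciphertexts and multiplication by plaintext values is enough for A and B to compute encrypted gradients and loss without seeing each other's data.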

Part 3: Incentive mechanism. A major characteristic of federated learning is that it addresses the question of why different organizations would jointly build a model. After the model is built, its performance shows up in actual applications and is recorded in a permanent data recording mechanism (such as a blockchain). Organizations that provide more data are better off, and the model’s effectiveness depends on each data provider’s contribution to the system. The benefits of the model are distributed to the parties through federated mechanisms, which continues to motivate more organizations to join the data federation.
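The text does not specify how performance is recorded or how benefits are divided. Purely as a hypothetical illustration, the sketch below keeps a tamper-evident, hash-chained log as a stand-in for the permanent recording mechanism and splits a reward in proportion to contribution scores. The functions `append_record`, `verify`, and `split_reward`, the record fields, and the scoring itself are all assumptions.

```python
import hashlib
import json

GENESIS = "0" * 64  # placeholder hash that precedes the first record

def record_hash(prev, payload):
    """Hash a record together with the hash of its predecessor."""
    body = json.dumps({"prev": prev, "payload": payload}, sort_keys=True)
    return hashlib.sha256(body.encode("utf-8")).hexdigest()

def append_record(chain, payload):
    """Append a payload to the log, linking it to the previous record."""
    prev = chain[-1]["hash"] if chain else GENESIS
    chain.append({"prev": prev, "payload": payload,
                  "hash": record_hash(prev, payload)})

def verify(chain):
    """Return True if no record has been altered or reordered."""
    prev = GENESIS
    for rec in chain:
        if rec["prev"] != prev or rec["hash"] != record_hash(prev, rec["payload"]):
            return False
        prev = rec["hash"]
    return True

def split_reward(total, contributions):
    """Split a reward in proportion to each party's contribution score."""
    score_sum = sum(contributions.values())
    return {party: total * score / score_sum
            for party, score in contributions.items()}

log = []
append_record(log, {"round": 1, "contributions": {"A": 3, "B": 1}})
print(verify(log), split_reward(100, log[0]["payload"]["contributions"]))
```

How contribution scores are measured is the hard part of any such mechanism and is left open here.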

Implementing the three parts above addresses not only privacy protection and the effectiveness of collaborative modeling among multiple organizations, but also how to reward organizations that contribute more data and how to implement incentives with a consensus mechanism. Federated learning is therefore a “closed loop” learning mechanism.